The US Financial Services AI Risk Management Framework (FS AI RMF)

The FS AI RMF is an industry-led, sector-specific AI risk management framework developed through public-private collaboration - including the Cyber Risk Institute (CRI), 108 financial institutions, the US Treasury, and the National Institute of Standards and Technology (NIST).

At a time when AI deployment is accelerating, it provides an operationalisation of the NIST AI RMF specifically tailored for financial services, reflecting the sector’s unique use cases, complexities and regulatory expectations.

It consists of:

- AI Adoption Stage Questionnaire: A self-assessment to help organisations classify their current AI adoption level.

- Risk and Control Matrix (RCM): A list of 230 Control Objectives that can be filtered by adoption stage, type of control function, and the AI principle it aims to satisfy.

- A Guidebook: A manual on how to implement the FS AI RMF, including how to utilise the following resources.

- Control Objective Reference Guide: Illustrative examples of what good controls and effective evidence look like.

All of the resources can be downloaded here.

What we can take away from the FS AI RMF

The real value of the FS AI RMF is that it turns AI governance from a set of principles into a practical toolkit that teams can apply, supporting financial institutions operating in the US at any stage of their AI journey.

Three features in particular set it apart:

1. Scalable and proportionate governance

Unlike existing AI guidance, the FS AI RMF does not assume firms are already mature in their deployment.

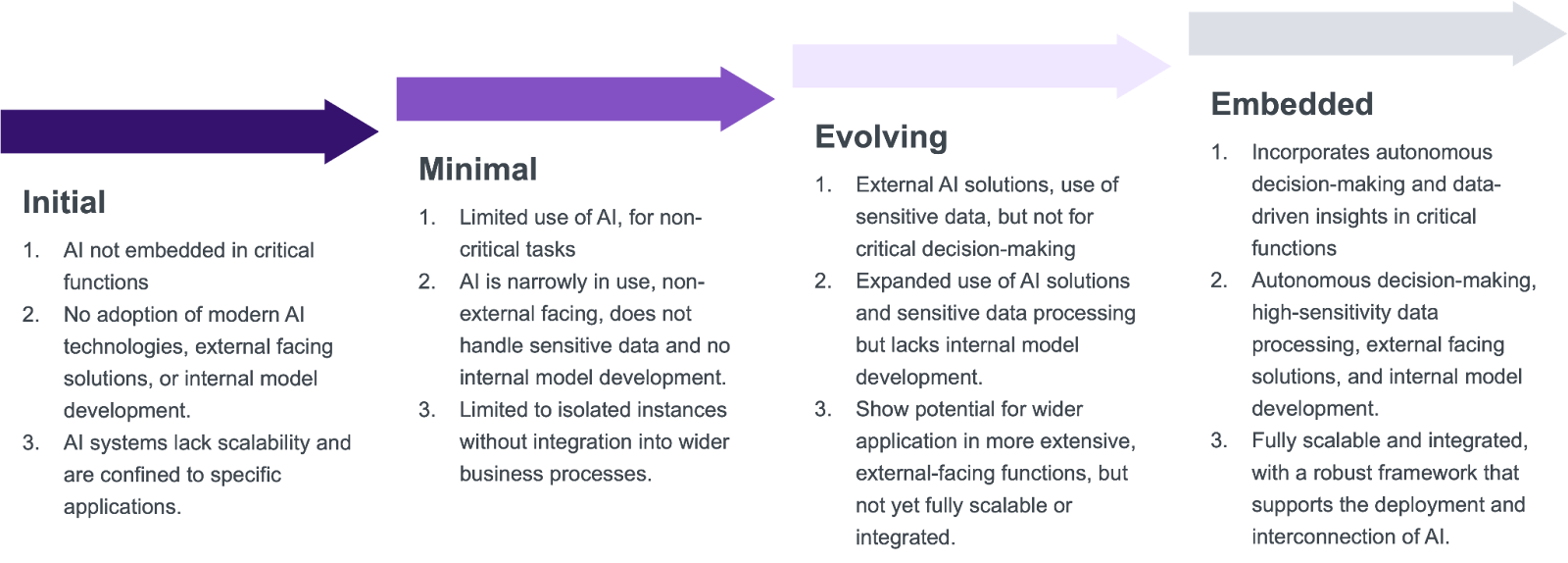

The AI Adoption Stage Questionnaire categorises firms into four distinct Adoption Stages (Initial, Minimal, Evolving, and Embedded), by considering three factors: 1) the business impact of AI, 2) the technology implementation, and 3) scalability.

- Right-sizing governance: This allows teams to focus on what’s relevant and avoid heavy-duty controls for low-risk early pilots.

- Governance that scales: Firms can start adopting AI early on, build momentum, and then progressively strengthen oversight as models move into production and become business-critical workflows.
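To make the idea of stage-based scoping concrete, here is a minimal sketch of how a team might filter a control list by adoption stage. Only the four stage names come from the framework as described above. The control IDs, the field names, the sample rows, and the assumption that controls accumulate as a firm moves up the stages are all hypothetical and are not taken from the actual Risk and Control Matrix.

```python
from dataclasses import dataclass
from enum import IntEnum


class AdoptionStage(IntEnum):
    # Stage names are from the article; the numeric ordering is an assumption.
    INITIAL = 1
    MINIMAL = 2
    EVOLVING = 3
    EMBEDDED = 4


@dataclass(frozen=True)
class ControlObjective:
    control_id: str           # hypothetical identifier, not a real RCM ID
    principle: str            # AI principle the control aims to satisfy
    min_stage: AdoptionStage  # assumed earliest stage at which it applies


# Hypothetical sample rows, for illustration only.
SAMPLE_RCM = [
    ControlObjective("EX-01", "accountable & transparent", AdoptionStage.INITIAL),
    ControlObjective("EX-02", "safe", AdoptionStage.MINIMAL),
    ControlObjective("EX-03", "safe", AdoptionStage.EVOLVING),
    ControlObjective("EX-04", "accountable & transparent", AdoptionStage.EMBEDDED),
]


def controls_in_scope(rcm, stage, principle=None):
    """Return controls applicable at `stage`, optionally narrowed by principle.

    Assumes controls are cumulative: a firm at a later stage keeps
    every control that applied at the earlier stages.
    """
    return [
        control
        for control in rcm
        if control.min_stage <= stage
        and (principle is None or control.principle == principle)
    ]


# A firm running early pilots sees only the lighter-touch controls.
pilot_scope = controls_in_scope(SAMPLE_RCM, AdoptionStage.MINIMAL)
print([control.control_id for control in pilot_scope])
```

Under these assumptions, a firm at the Minimal stage would see two of the four sample controls, while a firm at the Embedded stage would see all four. The real matrix may relate controls to stages differently, so treat this as an illustration of the scoping idea rather than of the framework's own logic.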

2. Actionable controls: moving from principles to practice

Principles alone do not tell firms how to implement AI controls. Organisations today understand that AI should be "transparent" or "fair," but it’s often unclear what risk and compliance teams need to do in practice, or what internal audit should test to verify that those principles are being met.

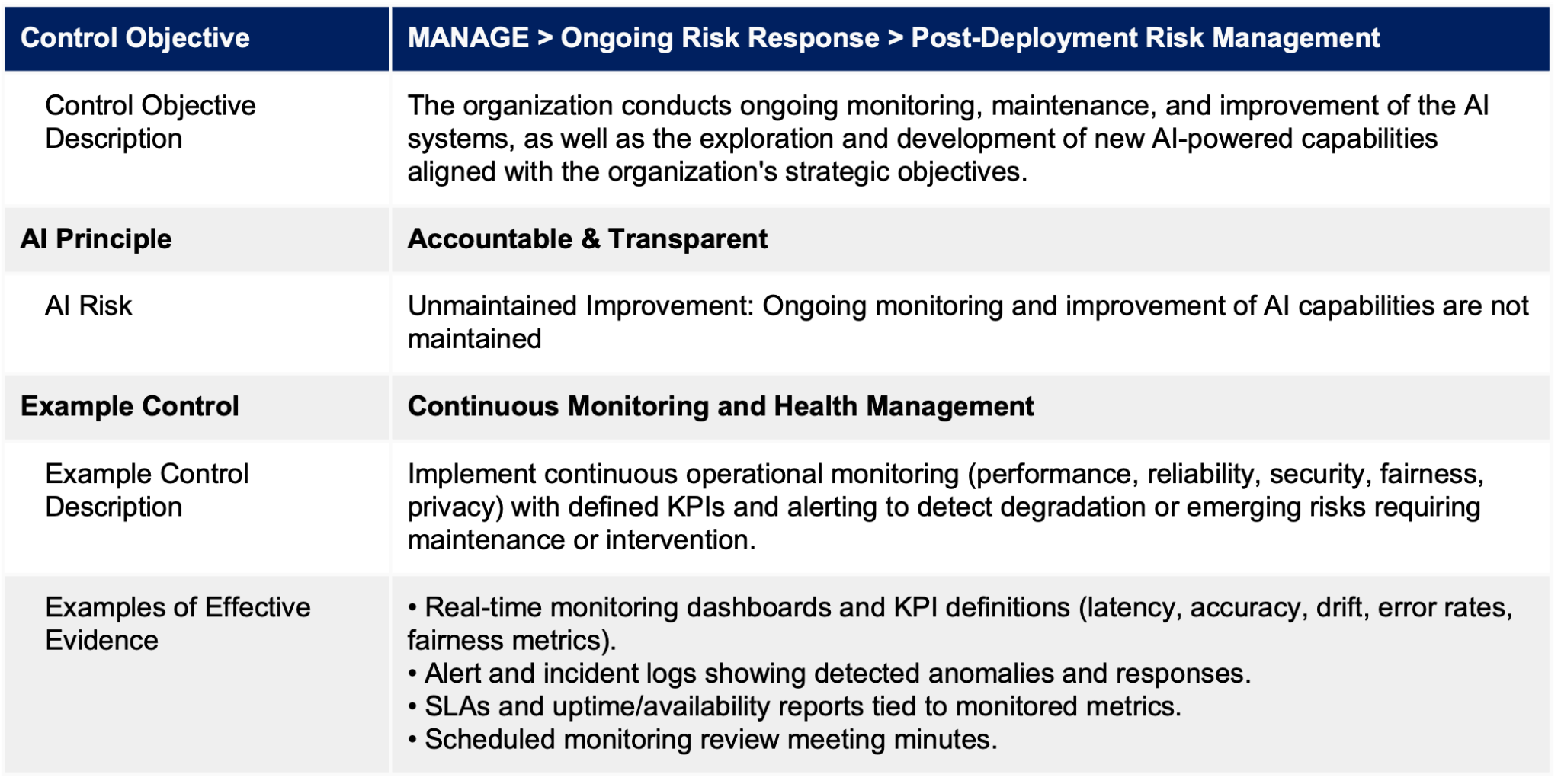

- Practical control recommendations: The FS AI RMF translates broad AI principles into specific, auditable controls, supported by examples and guidance on what “good” looks like.

- Mapped to AI risks: Each control is designed to address one of NIST’s AI Trustworthy Principles (for example, safe, accountable & transparent), which firms can then map into their risk taxonomies.

- Example: The table below illustrates the level of detail provided for a typical Control Objective, including the associated AI risk, a recommended control and examples of effective evidence.

3. A “Universal Complement”: preventing governance silos

Crucially, the FS AI RMF is designed to integrate into existing risk architectures rather than create parallel AI governance structures.

The FS AI RMF describes itself as a "universal complement", intended to plug into existing enterprise GRC structures across operational resilience, cybersecurity, third-party risk, model risk management, change management, and incident response.

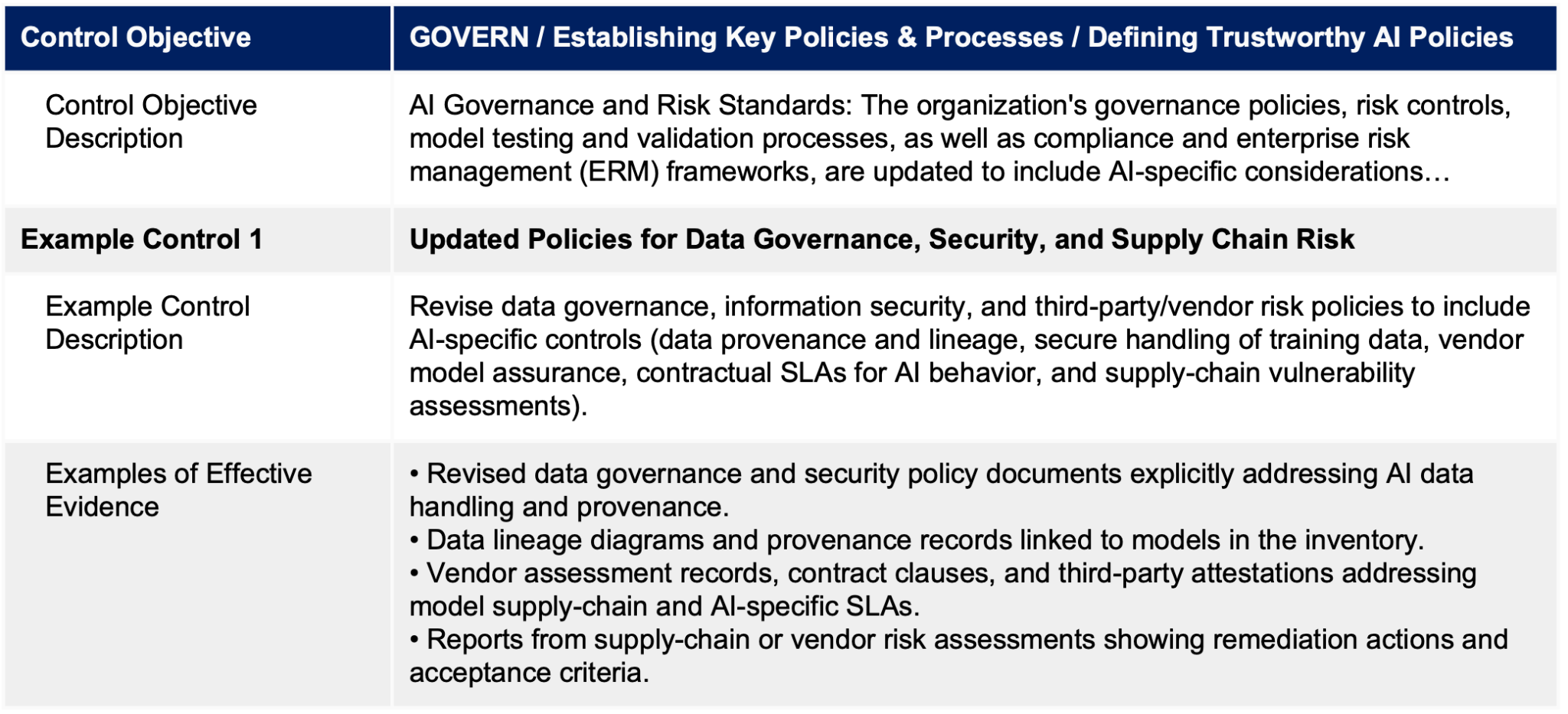

- No duplication: It helps firms refine their existing set-up without duplicating controls, accelerating implementation by embedding AI risk into current GRC structures.

- Defined ownership mapping: Recommendations outline how to integrate AI governance into policies, frameworks and SOPs owned by existing teams.

- Example: Rather than creating new standalone policies, it shows how to update existing data governance and security policies to cover AI-specific risks.

Our research: The Future of AI Governance & Compliance in Financial Services

The publication of the FS AI RMF represents a divergence: the US financial services industry now has a structured AI control framework. The UK does not.

An emerging theme in our ongoing research, The Future of AI Governance & Compliance in Financial Services, is that financial services firms require sector-specific, operational AI governance frameworks - not just high-level principles.

As AI adoption accelerates, it is critical that industry articulates - in practical terms - what robust AI governance looks like in the UK context.

Our research is being developed through collaborative efforts with independent researchers from the University of Oxford and the University of Glasgow, alongside senior compliance and risk leaders from leading financial institutions.

Click below to read more about our research and stay tuned for updates.
