Key takeaways

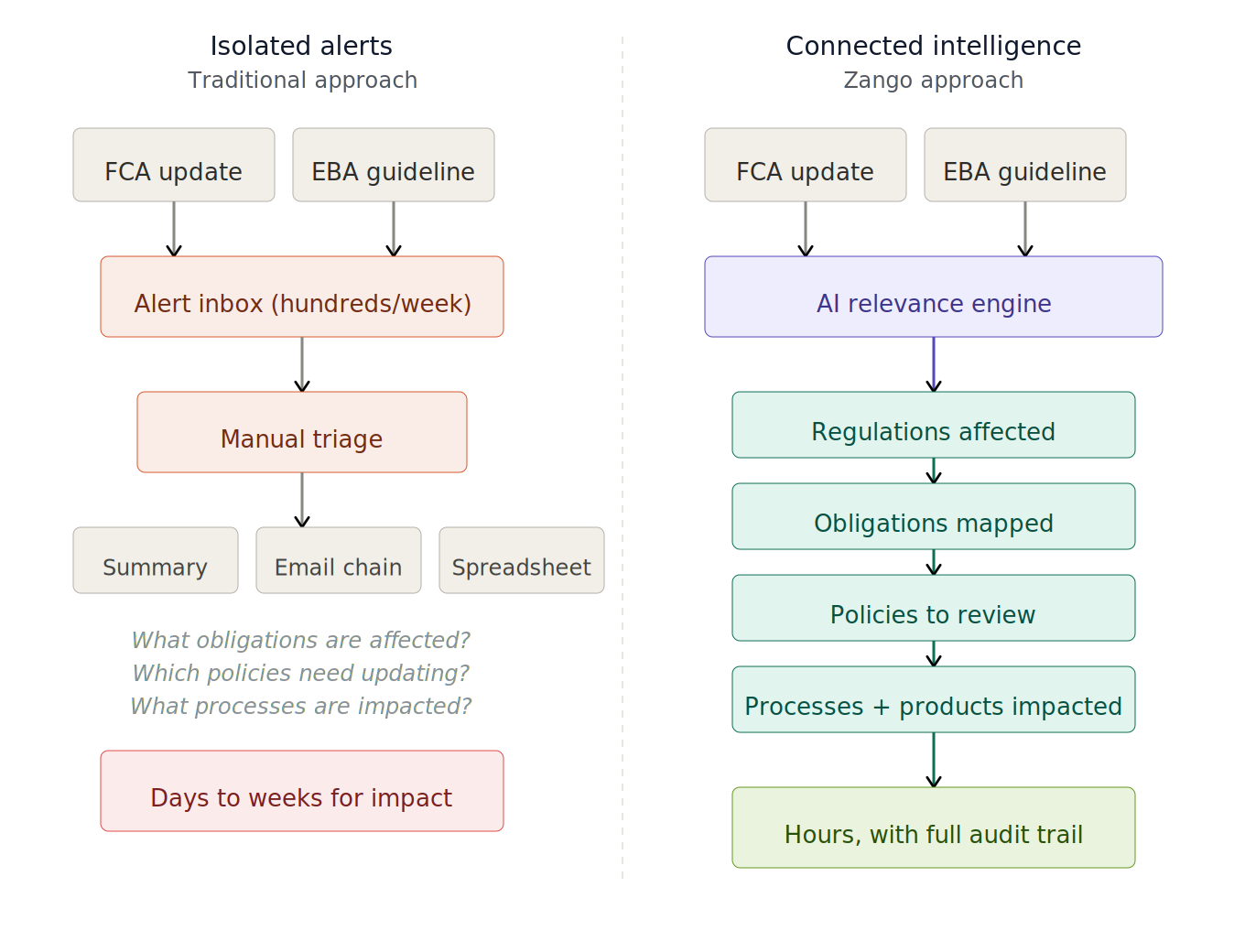

- Regulatory horizon scanning has become a strategic governance function, but most teams are still stuck processing isolated alerts that lead nowhere useful.

- Noise is an issue. But the real gap is that alerts sit disconnected from the obligations, policies, processes, and products they actually affect.

- AI can now go beyond noise filtering. It can trace the impact of a regulatory change through an institution's full compliance chain, turning scanning into something actionable.

- Zango connects horizon scanning to the downstream compliance framework, so teams see what changed and what needs to change in response.

The reality of horizon scanning today

Regulatory horizon scanning, at its simplest, means keeping a structured watch on what regulators, legislators, and supervisory bodies are publishing, and working out whether any of it matters to your institution. It covers everything from formal rule changes and consultation papers to thematic reviews and informal supervisory expectations. And for compliance teams at banks, insurers, and asset managers, it is a fairly time-consuming activity that still runs largely on manual effort.

The sheer volume is part of the story. The FCA alone published over 600 documents in 2025, spanning policy statements, consultation papers, finalised guidance, and Dear CEO letters. The EBA added more than 200. Then there are national transpositions across EU member states (each with its own quirks), ESMA opinions, informal supervisory letters, and conference speeches in which a regulator hints at future enforcement direction, leaving compliance teams scrambling to work out what was actually meant. Add it all up and you are easily north of a thousand items per year that someone needs to review.

Most compliance teams deal with this through a mix of regulatory news services, email alerts from law firms, internal spreadsheet trackers, and a standing meeting where someone summarises what came in that week. It works. It has worked for years. But manual triage is not the best use of a compliance officer's time, and in 2026 there is a much better way to solve this problem.

The real problem with horizon scanning today

When people talk about the challenge of horizon scanning, the conversation usually centres on volume: too many alerts, too much noise, not enough time. That is a real issue. The bigger one is the disconnect between horizon scanning and the business: what a change affects and what needs to change in response.

The deeper problem is structural. In most institutions, horizon scanning operates as a standalone activity. A regulatory update comes in. Someone reads it, decides whether it is relevant, writes a summary, and sends it to the people who need to know. What this process almost never does is connect that alert to the rest of the compliance framework.

Consider what happens when the EBA publishes revised guidelines on outsourcing arrangements. The scanning process flags the update, summarises the changes, and circulates it. But which of your existing obligations does it actually affect? Which internal policies will need updating as a result? Which operational processes are implicated? And do any of your products or services carry exposure to this particular change?

That chain, from alert to obligation to policy to process to product, is where the actual compliance work lives. In most organisations, building that chain is a manual exercise. It takes days, sometimes weeks, and depends heavily on the institutional knowledge of a few people who know the institution's operations well. This is the gap. Getting alerts faster does not close it. Getting fewer, more relevant alerts helps, but does not close it either. The gap is between knowing something has changed and understanding what that change means for your specific institution.

Isolated alerts vs. connected intelligence

What AI has made possible (and what it has not!)

The first wave of AI in horizon scanning was really about ingestion. Scrapers, keyword filters, basic NLP to categorise alerts. It was useful, in that it reduced the volume of irrelevant noise reaching analysts. But it did not help with interpretation or impact assessment. Teams still had to do all the thinking themselves.

Large language models shifted things. AI can now genuinely read regulatory text, not just match keywords. It can work through a 200-page consultation paper and pull out the sections that represent material changes versus editorial tidying. It can produce plain-language summaries that a non-specialist can act on without having to wade through the original. That matters.

But the shift that matters more, and that most people are not yet talking about, is in what AI can connect.

If an AI system has access to an institution’s obligations register, its internal policy library, its process documentation, and its product taxonomy, it can do something fundamentally different from anything that came before. It can trace a regulatory change through the full compliance chain and tell you: this is what changed, these are the obligations it touches, these are the policies that are now out of alignment, and these processes need review.

The difference is between receiving a notification that says “the FCA updated its guidance on financial promotions” and receiving an intelligence brief that says “this update affects three obligations under COBS 4, your social media marketing policy is now misaligned, and the approval process for your savings product campaign needs revising before Q3 sign-off.”

One creates a to-do item. The other creates a structured response plan.

Yet two limitations remain. The first is context: for AI to reason about regulatory relevance rather than just pattern-match against it, the system must be trained on institutional context, learning through feedback what is material, what constitutes a genuine gap, and what falls within acceptable tolerance.

The second constraint is worth stating plainly: large language models hallucinate. They produce confident, coherent, and occasionally wrong outputs. Human review is therefore not a workaround for an immature technology. It is a structural feature of any responsible deployment. Compliance officers are not checking AI's work because it cannot be trusted at all. They are checking it because consequential judgements about regulatory exposure require human accountability.

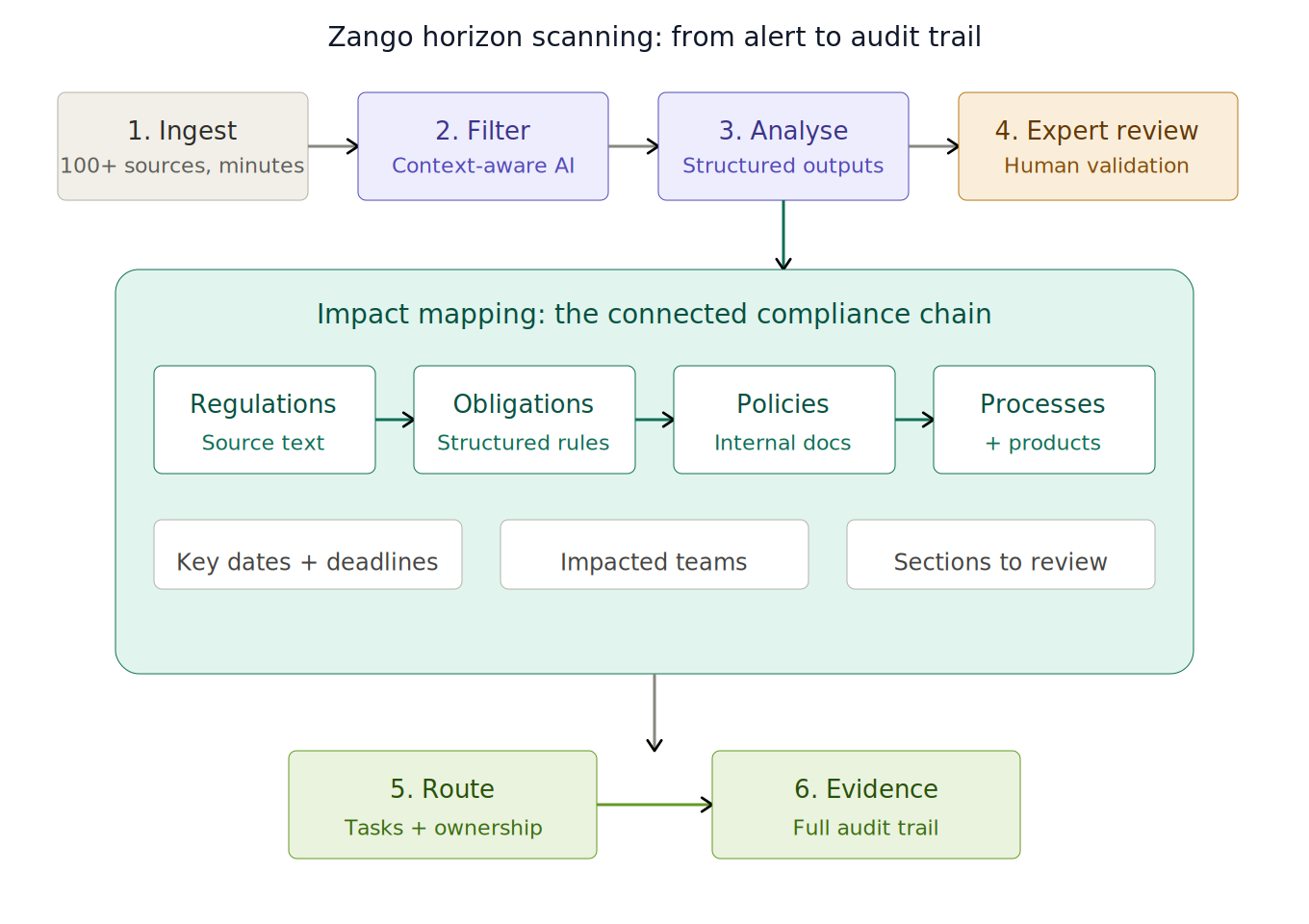

How Zango approaches this

Zango monitors over 500 regulatory sources across FCA, PRA, EBA, SEC, FINRA, MAS, and others, picking up updates within minutes of publication. But monitoring on its own is not the hardest part. What makes the platform different is what happens after the alert arrives.

Context-aware relevance filtering. Every institution using Zango has its jurisdiction, business model, product set, risk appetite, and organisational structure configured in the system. When an update comes in, the AI assesses relevance against that specific institutional profile, not against generic keywords or broad sector tags. The result is fewer alerts, and the ones that do arrive actually matter.
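The paragraph above describes an AI model judging relevance against an institutional profile. As a much simpler, rule-based stand-in (an assumption for illustration, not how Zango scores alerts), the sketch below discards updates outside the institution's jurisdictions and ranks the rest by how many of their topics overlap the profile. `InstitutionProfile`, `RegulatoryUpdate`, `relevance`, `filter_updates`, the topic labels, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstitutionProfile:
    """Hypothetical institutional profile used for relevance filtering."""
    jurisdictions: frozenset[str]
    activities: frozenset[str]  # products, services, operating-model traits


@dataclass(frozen=True)
class RegulatoryUpdate:
    """Hypothetical representation of an incoming regulatory publication."""
    source: str
    jurisdiction: str
    topics: frozenset[str]


def relevance(update: RegulatoryUpdate, profile: InstitutionProfile) -> float:
    """Return 0.0 if the update is out of jurisdiction (or has no topics),
    otherwise the share of its topics that match the profile's activities."""
    if update.jurisdiction not in profile.jurisdictions or not update.topics:
        return 0.0
    return len(update.topics & profile.activities) / len(update.topics)


def filter_updates(updates, profile, threshold=0.5):
    """Keep updates scoring at or above the threshold, most relevant first."""
    scored = [(relevance(u, profile), u) for u in updates]
    kept = [pair for pair in scored if pair[0] >= threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [u for _, u in kept]


# Illustrative usage with made-up topic labels.
profile = InstitutionProfile(
    jurisdictions=frozenset({"EU"}),
    activities=frozenset({"cloud_hosting", "outsourcing", "retail_deposits"}),
)
eba_outsourcing = RegulatoryUpdate("EBA", "EU", frozenset({"outsourcing", "cloud_hosting", "ict_risk"}))
sec_outsourcing = RegulatoryUpdate("SEC", "US", frozenset({"outsourcing"}))
eba_crypto = RegulatoryUpdate("EBA", "EU", frozenset({"crypto_assets"}))

# Only the EBA outsourcing update survives (2 of its 3 topics match).
relevant = filter_updates([eba_outsourcing, sec_outsourcing, eba_crypto], profile)
```

Topic overlap is a crude proxy for materiality; the point of the sketch is only that filtering happens against the institution's own profile rather than against a global keyword list.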

The connected compliance chain. This is the core of it. Each alert is linked back to the institution's obligations register. When a change affects an existing obligation, Zango identifies the downstream policies, controls, and processes that implement it. The compliance team does not just see "EBA published new outsourcing guidelines." They see: these four obligations are affected, these two policies need review, and here are the specific sections within each policy that may need revising.
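To make the idea of a connected chain concrete, here is a minimal, generic sketch (not Zango's implementation) that models obligations, policies, controls, and processes as a directed graph and walks it breadth-first from the obligations an update touches. The node kinds, the identifiers, and the `trace_impact` helper are hypothetical.

```python
from collections import deque

# A node is (kind, identifier); edges point from a requirement to whatever
# implements it downstream. All identifiers below are illustrative only.
Node = tuple[str, str]


def trace_impact(graph: dict[Node, list[Node]], affected: list[Node]) -> dict[str, list[str]]:
    """Breadth-first walk from the directly affected obligations to every
    downstream policy, control, and process, grouped by node kind.
    Each node is reported once, in the order it is first reached."""
    seen: set[Node] = set()
    queue = deque(affected)
    impact: dict[str, list[str]] = {}
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # shared downstream nodes are only reported once
        seen.add(node)
        kind, ident = node
        impact.setdefault(kind, []).append(ident)
        queue.extend(graph.get(node, []))
    return impact


# Hypothetical slice of an obligations register linked to its implementation.
graph: dict[Node, list[Node]] = {
    ("obligation", "OB-1"): [("policy", "Outsourcing Policy 4.2")],
    ("obligation", "OB-2"): [("policy", "Outsourcing Policy 4.2"), ("policy", "ICT Risk Policy 2.1")],
    ("policy", "Outsourcing Policy 4.2"): [("process", "Vendor onboarding")],
    ("policy", "ICT Risk Policy 2.1"): [("control", "Cloud exit-plan review")],
}

impact = trace_impact(graph, [("obligation", "OB-1"), ("obligation", "OB-2")])
```

The traversal itself is trivial; the hard part in practice is building and maintaining accurate links in the register, which is exactly the manual chain-building work described earlier.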

Structured outputs, not summaries. Traditional scanning produces a written summary. Someone then has to read it, interpret it, and figure out what to do. Zango produces structured impact assessments: key dates and deadlines, affected regulatory domains, impacted business units, specific policy sections flagged for review, and tasks routed to the right owners. The output is a work plan, not a document to be filed.

Human expert validation. No AI output that a client will act on goes out without a compliance SME reviewing it first. We made this a hard architectural constraint from the start, not something you toggle on in settings. The AI is good at working through volume and spotting connections quickly. But regulatory interpretation has grey areas, and that is where experienced humans earn their keep.

Audit trail from day one. Everything is logged. The alert, the classification decision, the routing, the actions taken afterwards, all with timestamps and who did what. Sounds basic, but try producing that trail from your current process when a regulator comes asking about a change you handled eight months ago. Most teams end up piecing it together from Outlook and SharePoint.
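The audit trail described above boils down to an append-only record of timestamped events per alert. A minimal sketch, assuming a simple in-memory store (a real system would persist entries durably); `AuditEntry`, `AuditLog`, the action names, and the alert IDs are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable, timestamped record of who did what to which alert."""
    timestamp: datetime
    alert_id: str
    actor: str
    action: str
    detail: str = ""


class AuditLog:
    """Hypothetical in-memory log that exposes append and read, but no edit or delete."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, alert_id: str, actor: str, action: str, detail: str = "") -> AuditEntry:
        """Append a new entry stamped with the current UTC time."""
        entry = AuditEntry(datetime.now(timezone.utc), alert_id, actor, action, detail)
        self._entries.append(entry)
        return entry

    def history(self, alert_id: str) -> list[AuditEntry]:
        """Return every entry for one alert, in the order it was recorded."""
        return [e for e in self._entries if e.alert_id == alert_id]


# Illustrative usage: reconstructing how one alert was handled.
log = AuditLog()
log.record("ALERT-001", "system", "classified", "high priority")
log.record("ALERT-001", "compliance.sme", "validated")
log.record("ALERT-002", "system", "classified", "low priority")
log.record("ALERT-001", "system", "task_assigned", "Head of Operational Resilience")
trail = log.history("ALERT-001")
```

Capturing entries at the moment each step happens, rather than reconstructing them later, is what makes the trail answerable months afterwards.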

Zango horizon scanning: from alert to audit trail

What this looks like in practice

Take a concrete scenario: the EBA puts out updated guidelines on ICT and security risk management under DORA. With a traditional setup, that lands as an email alert, probably from a law firm newsletter. It sits in a compliance inbox alongside fifty other things. A few days later someone reads it, writes up a summary, and begins the slow process of working out what it means for the institution. Maybe they send a few emails to find out who owns the relevant policies. Maybe they set up a meeting for next week.

With Zango, the update is picked up within minutes of publication. The AI cross-references it against the institution’s profile (EU-regulated bank, uses third-party cloud hosting, has outsourcing arrangements) and flags it as high priority.

From there, it identifies the obligations in the register that are directly affected. It maps those obligations to the internal policies that implement them, flagging specific sections that may need revision. It generates tasks with deadlines pulled from the regulatory implementation timeline, assigned to the relevant policy owners. The Head of Operational Resilience gets a brief with the full chain laid out: what changed, what it affects, and what needs to happen next.

The whole sequence, from publication to task assignment, is captured with timestamps. If a supervisor asks about it six months later, the evidence trail is already there.

What changes is not just speed, though going from days to hours matters. It is that the compliance team starts from a structured impact assessment rather than a blank page. They are reviewing and validating, not building the analysis from scratch. Having reviewed the impact, an institution may still decide to bring in an external expert to implement the required changes or controls, but it makes that decision on an informed basis.

Three questions worth asking

If you are thinking about whether your horizon scanning setup is fit for purpose, three questions tend to cut through the noise.

The most revealing test is a simple one: when a regulatory update comes in, how long until someone in your team can say, with confidence, what it actually means for your obligations and your policies? Not just “here is a summary of what the regulator said,” but “here is what we need to change and by when.” If that takes your team a week, no amount of faster alert delivery solves the problem. The bottleneck is downstream.

Then there is the audit question. If someone from the board calls tomorrow and asks how you handled a specific regulatory change from last year, can you pull up a clean, timestamped record of the whole chain, from identification through to remediation? Or does someone need to go digging through email threads and calendar invites to reconstruct what happened? I have sat in enough compliance meetings to know which scenario is more common.

And the one that really gets to the heart of it: does your scanning operation produce intelligence, or does it just produce reading material? There is a world of difference between the two. Reading material gets filed. Intelligence gets acted on. Which one your team is producing says a lot about whether compliance is a strategic function or an administrative one.

Zango is the AI compliance layer for financial institutions. The platform connects regulatory monitoring to obligations, policies, and audit-ready evidence across FCA, PRA, EBA, and 500+ other regulatory sources. To see how connected horizon scanning works for your institution, speak with our team.
