Key takeaways

- The FCA intervened on 573 non-compliant promotions in 2021. By 2024, that figure was 19,766. Most compliance teams are running the same workflow they had when the number was under a thousand, and it shows.

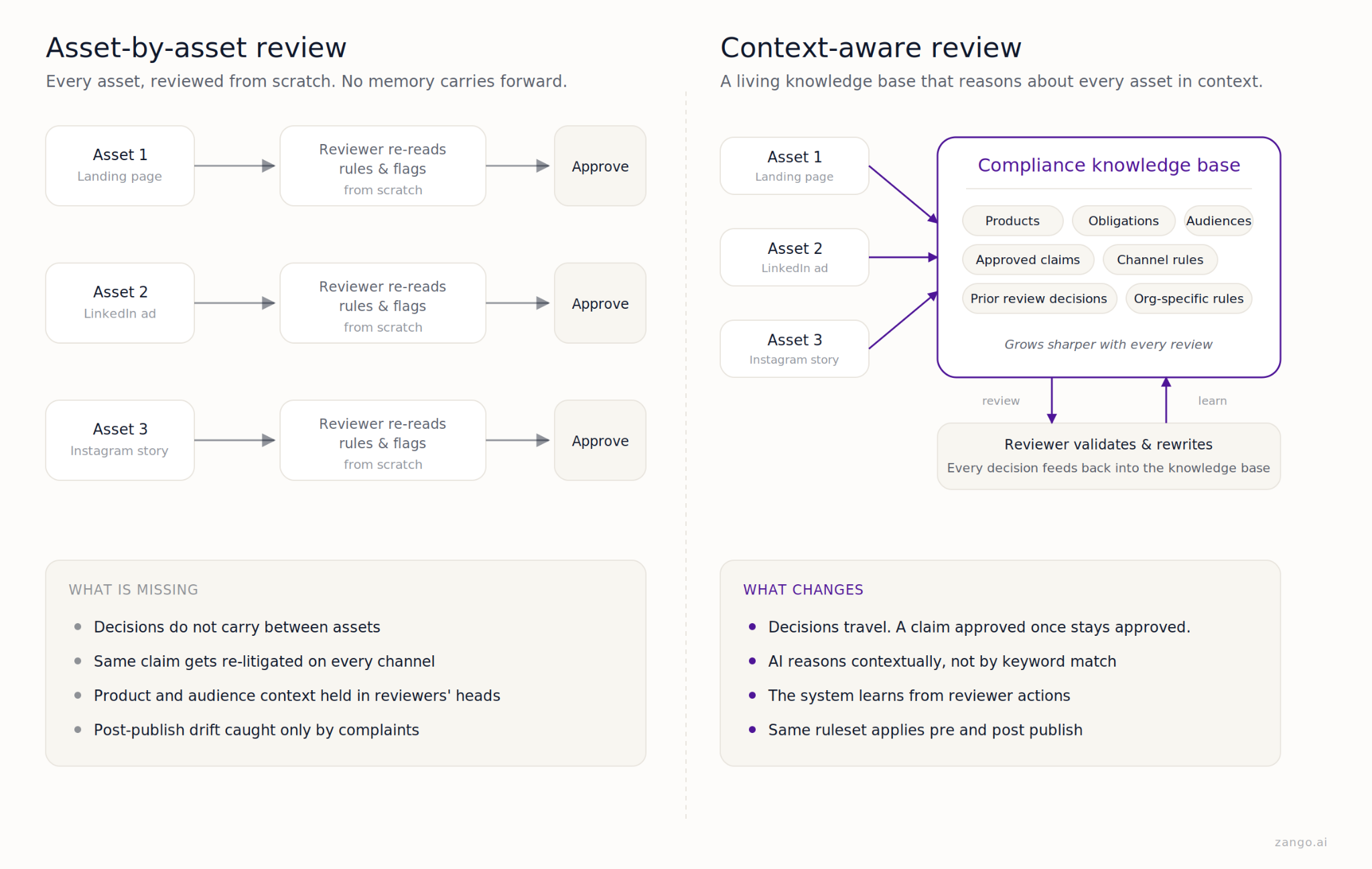

- Volume is part of it. But the bigger issue is that asset-by-asset review has no memory. The same disclaimers, risk qualifiers, and claim formats get worked through again on every new campaign, by reviewers who have seen them plenty of times before.

- AI finally makes a different model viable: a compliance knowledge base that holds your products, obligations, approved claims, channel rules, and prior review decisions, and reasons about every new asset against all of it.

- Zango AI's Marketing Compliance plugs live regulatory intelligence into that knowledge base. Every asset gets reviewed in context. The audit trail is there by default.

The reality of marketing compliance today

Marketing compliance in financial services covers a bigger surface area than most people outside compliance or marketing realise. In the UK, the rules start with FSMA Section 21 and run through COBS 4 and PS23/6 for cryptoasset promotions, with Consumer Duty now sitting on top of all of it. In the EU, the Unfair Commercial Practices Directive and MiCA marketing rules complicate the picture.

The scale of regulator activity has shifted sharply. In 2021, the FCA intervened on 573 non-compliant financial promotions. By 2024, that figure was 19,766, a 34-fold jump in three years. The FCA has also opened a new front against finfluencers, running an international operation across nine jurisdictions in 2025 that resulted in arrests, warning alerts, and over 650 takedown requests across social platforms.

Meanwhile the marketing surface keeps expanding. A single campaign might live across a landing page, paid search, LinkedIn ads, Instagram stories, a newsletter, an affiliate’s blog, and an in-product prompt, each with its own compliance texture.

The workflow for handling this looks roughly the same everywhere: marketing puts a draft together, a ticket goes to compliance, a reviewer flags what needs changing, rework happens, the asset goes live. Somewhere in a SharePoint folder sit exported PDFs and Slack screenshots as the audit trail.

This worked for years, when a campaign meant one or two assets. At today's volumes it is no longer fit for purpose. Time for a rethink.

The real problem: asset-by-asset review has no memory

The conversation usually centres on volume and back-and-forth. Too many assets, too many rework loops. Those are real issues, but they are symptoms.

The underlying issue is structural. In most institutions, marketing compliance runs one asset at a time. A reviewer opens an asset, recalls the applicable rules, writes feedback, closes the ticket. Next asset, start over. The person who spent an hour working out the right risk qualifier for a savings campaign has to do the same thinking the next day when that claim turns up on LinkedIn. The workflow does not remember.

The knock-on effects do not show up in a review time metric. A claim approved on a landing page quietly drifts on a social post because the reviewer is working from scratch. A disclaimer approved for a UK audience gets copy-pasted into a DACH campaign where the wording no longer fits. A post-publish edit removes a risk warning that was the entire reason a page got approved. Nobody notices for four months.

There is no mechanism to retain institutional memory or enforce consistency across reviews. In a domain this subjective, that matters. The same claim can be approved one week and questioned the next. A judgement call made for one market gets reused in another where it no longer holds. The result changes according to who is reviewing.

There is a longer chain that asset-by-asset review never touches: product to obligation to approved claim to disclaimer to channel rule to prior decision. That chain is where the real work of marketing compliance lives. In most firms, it lives in the heads of two or three senior reviewers. When those people are on leave, things slow down. When they leave the firm, something harder to fix happens.
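To make that chain concrete, here is a minimal sketch of it as a data structure. Every name in it (`Product`, `ApprovedClaim`, `PriorDecision`, `trace`) and all of the sample data are assumptions made for illustration, not a description of any particular system.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PriorDecision:
    asset_id: str
    outcome: str  # e.g. "approved" or "rewritten"


@dataclass
class ApprovedClaim:
    text: str
    disclaimer: str
    channel_rules: dict[str, str] = field(default_factory=dict)
    prior_decisions: list[PriorDecision] = field(default_factory=list)


@dataclass
class Product:
    name: str
    obligations: list[str]
    claims: list[ApprovedClaim] = field(default_factory=list)


def trace(product: Product, claim_text: str) -> list[str]:
    """Return the full chain behind one approved claim, or [] if unknown."""
    for claim in product.claims:
        if claim.text == claim_text:
            chain = [f"product: {product.name}"]
            chain += [f"obligation: {o}" for o in product.obligations]
            chain += [f"approved claim: {claim.text}", f"disclaimer: {claim.disclaimer}"]
            chain += [f"channel rule ({ch}): {r}" for ch, r in claim.channel_rules.items()]
            chain += [f"prior decision ({d.asset_id}): {d.outcome}" for d in claim.prior_decisions]
            return chain
    return []


# Illustrative data only; the wording below is not regulatory text.
savings = Product(
    name="Easy Saver",
    obligations=["COBS 4"],
    claims=[
        ApprovedClaim(
            text="Variable rate, subject to market conditions",
            disclaimer="Rates can go down as well as up.",
            channel_rules={"linkedin": "disclaimer must appear in the post body"},
            prior_decisions=[PriorDecision("landing-page-01", "approved")],
        )
    ],
)

for line in trace(savings, "Variable rate, subject to market conditions"):
    print(line)
```

Once the chain is stored rather than remembered, any reviewer can walk it, not only the two or three people who built it.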

Getting faster at reviewing individual assets does not close this gap. Hiring more reviewers does not close it either. The gap is the absence of institutional memory in the review process itself.

What AI has made possible (and what it has not)

Early AI for financial promotions (finprom) was mostly keyword work: scanning for banned terms like “guaranteed returns” and flagging missing mandatory warnings. Useful, but it never helped with reasoning. Finprom is not a keyword problem. You can write an ad using every safe word in the playbook and still mislead your reader, because meaning lives in context.
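A keyword scanner of this kind fits in a few lines, which is part of why it was the first thing built. The sketch below is illustrative: the term lists and the `keyword_scan` function are assumptions, and the sample ad is invented.

```python
BANNED_TERMS = ["guaranteed returns"]    # illustrative list
REQUIRED_WARNINGS = ["capital at risk"]  # illustrative list


def keyword_scan(ad_text: str) -> list[str]:
    """Flag banned terms that are present and required warnings that are absent."""
    text = ad_text.lower()
    findings = [f"banned term: {t}" for t in BANNED_TERMS if t in text]
    findings += [f"missing warning: {w}" for w in REQUIRED_WARNINGS if w not in text]
    return findings


# Passes the scan, even though a reviewer might still ask whether
# "earn up to" is balanced against the risk.
print(keyword_scan("Earn up to 8% on your balance. Capital at risk."))

# Caught, because the banned phrase appears and the warning does not.
print(keyword_scan("Guaranteed returns of 8%!"))
```

The first ad comes back clean because the scanner only checks which strings are present. It has no view on prominence, balance, or what the reader will take away.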

Large language models moved that ceiling. A model can now read an ad the way a reviewer does. Ask whether “earn up to 8% on your balance” is balanced against the risk, and a model will flag the phrasing as reading like a yield guarantee without a prominent qualifier. Show it a disclaimer buried in six-point grey, and it will flag the prominence issue, not just the presence of the text.

On its own, though, a model that reads well still leaves the work running asset by asset. The shift that matters more is memory. Give an AI system access to the firm’s product taxonomy, its obligations, its library of approved claims, the channel-specific rules compliance has built up, and the full history of prior review decisions, and something different happens.

The difference is between “this ad does not contain the phrase ‘capital at risk’” (a text match) and “this is a UK retail cryptoasset ad under PS23/6, your firm has previously settled on this specific risk warning format for this product, and the phrasing ‘earn up to’ has been flagged and rewritten three times in the last quarter because it reads as a yield guarantee in context.”

One is a checklist result. The other is a judgement grounded in the firm’s own compliance history.

Two caveats. First, LLMs hallucinate. They produce outputs that read confidently and are sometimes just wrong. Human review is not a stopgap until models improve; it is a structural part of any serious deployment, because the consequential calls in finprom have to rest with someone who can be held accountable.

Second, an AI system is only as good as the context it has to work with. A general-purpose model evaluating ads against a generic rulebook will miss most of what your senior reviewer would catch. The context is the product.

How Zango AI approaches this

When we started building Marketing Compliance at Zango AI, the instinct from the first few conversations was to make a smarter pre-publish scanner. What we found, working with our earliest clients, was that the scanner was the easy half.

The hard problem was the memory one. Every team we worked with had reviewers holding institutional context in their heads that the tool had no way to see. So we inverted the architecture. The scanner became downstream. The knowledge base became the product.

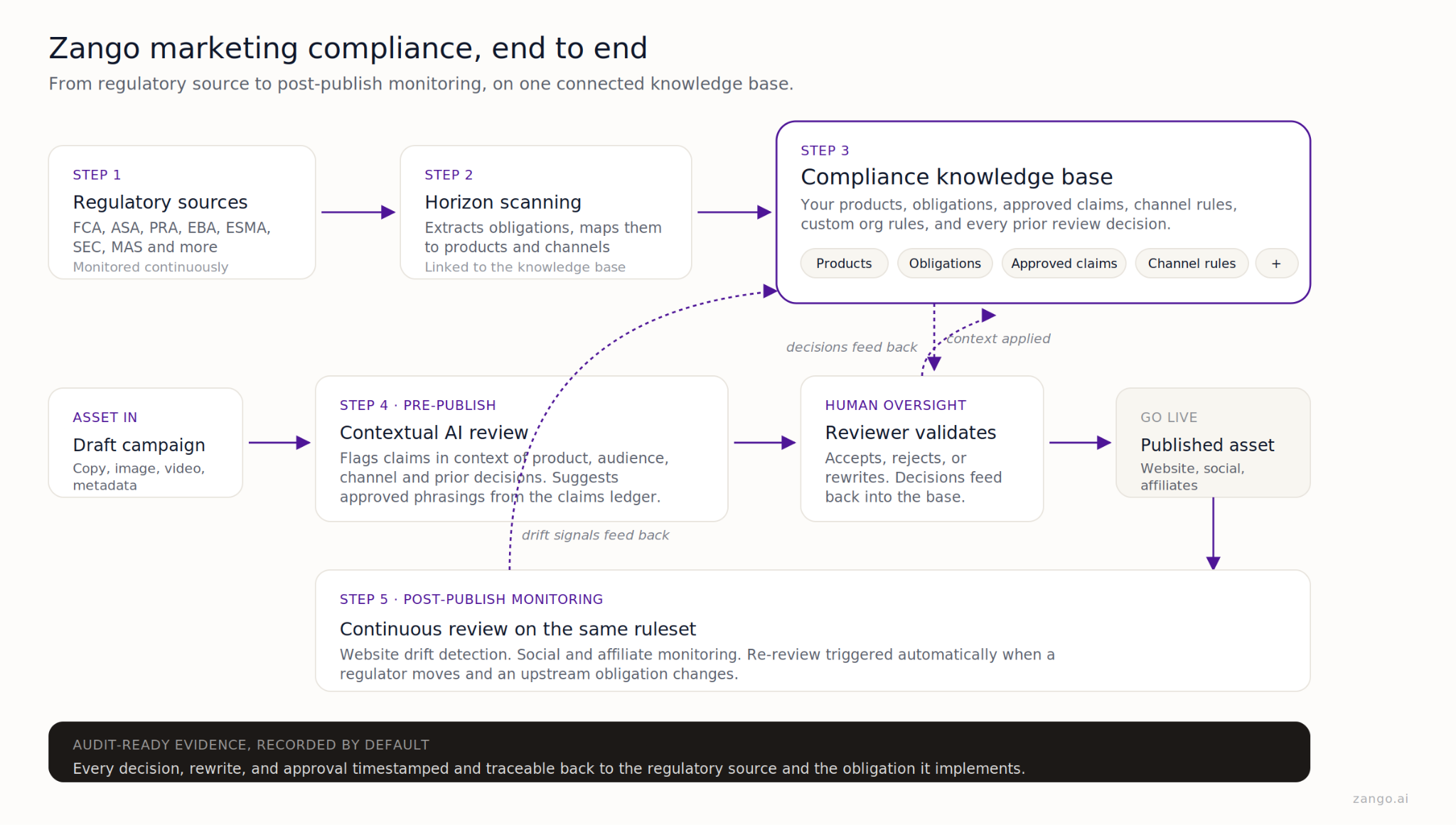

Product-aware context. Before any asset is reviewed, the system already knows what it promotes, which jurisdiction it targets, which channel it runs on, and who the audience is. A yield claim on a UK retail crypto ad gets evaluated against different rules than the same claim on a German institutional product page.
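One way to picture product-aware context is as rule selection keyed on the asset's attributes. This is a minimal sketch under assumed names (`AssetContext`, `Rule`, `applicable_rules`) with hypothetical rule identifiers. It is not a description of how Zango implements it.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class AssetContext:
    product_type: str
    jurisdiction: str
    audience: str
    channel: str


@dataclass
class Rule:
    rule_id: str
    # Attribute values the asset must match; an empty dict applies everywhere.
    conditions: dict[str, str] = field(default_factory=dict)


def applicable_rules(ctx: AssetContext, rules: list[Rule]) -> list[str]:
    """Return the ids of rules whose conditions all match the asset context."""
    ctx_fields = asdict(ctx)
    return [
        r.rule_id
        for r in rules
        if all(ctx_fields.get(k) == v for k, v in r.conditions.items())
    ]


# Hypothetical rule ids, for illustration only.
RULES = [
    Rule("fair-clear-not-misleading"),
    Rule(
        "uk-retail-crypto-risk-warning",
        {"jurisdiction": "UK", "audience": "retail", "product_type": "cryptoasset"},
    ),
    Rule("de-institutional-yield-wording", {"jurisdiction": "DE", "audience": "institutional"}),
]

uk_ad = AssetContext("cryptoasset", "UK", "retail", "instagram")
de_page = AssetContext("cryptoasset", "DE", "institutional", "product_page")

print(applicable_rules(uk_ad, RULES))
print(applicable_rules(de_page, RULES))
```

The same yield claim would be checked against a different rule set in each case, before anyone reads a word of the copy.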

Connected to the regulatory source. Horizon scanning monitors hundreds of sources. When the FCA updates its social media guidance or the ASA publishes a ruling that moves the standard, the change flows into the obligations register and into the marketing knowledge base wherever relevant. Live assets approved under an obligation that has since moved get flagged for re-review.
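The re-review trigger can be sketched as a simple query: find live assets approved under an obligation before that obligation changed. The names (`LiveAsset`, `needs_rereview`), obligation ids, and dates below are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LiveAsset:
    asset_id: str
    obligation_ids: frozenset[str]  # obligations the approval relied on
    approved_on: date


def needs_rereview(assets: list[LiveAsset], obligation_id: str, changed_on: date) -> list[str]:
    """Ids of assets approved under the obligation before it changed."""
    return [
        a.asset_id
        for a in assets
        if obligation_id in a.obligation_ids and a.approved_on < changed_on
    ]


# Hypothetical assets and dates.
LIVE = [
    LiveAsset("instagram-story-07", frozenset({"social-media-guidance"}), date(2024, 1, 15)),
    LiveAsset("landing-page-01", frozenset({"risk-warning"}), date(2024, 1, 20)),
    LiveAsset("linkedin-ad-12", frozenset({"social-media-guidance"}), date(2024, 6, 1)),
]

# Suppose the social media guidance was updated on 1 March 2024.
print(needs_rereview(LIVE, "social-media-guidance", date(2024, 3, 1)))
```

This only works if each approval records which obligations it relied on, which is the link the asset-by-asset workflow never stores.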

Your own rules, alongside the regulatory ones. Every firm has internal standards that go beyond the regulatory baseline, such as brand guidelines, a claims ledger of approved phrasings, and risk qualifiers settled after one too many arguments. Zango holds all of this as first-class rules, not free-text notes in a reviewer’s sidebar.

Contextual review, not keyword matching. The AI reads the claim, works out what the firm is actually saying, and evaluates whether the statement is fair, clear, and appropriately qualified for this product and this audience. Two ads can use identical words and end up with different outcomes, because context changes what those words mean in practice.

Reviewer decisions train the base. When a senior reviewer rewrites “guaranteed yield” to “variable rate, subject to market conditions” and cites COBS 4.5.2, Zango captures the rewrite, the rule reference, and the product context. Two weeks later, when the same phrasing appears on a different product, the AI surfaces the earlier rewrite as a suggested fix.
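As a rough sketch of the capture-and-resurface loop, assume each decision is stored with its rewrite, rule reference, and product, and looked up when the same phrasing appears again. The class and field names are hypothetical, and plain substring matching stands in here for whatever similarity logic a production system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewDecision:
    original: str
    rewrite: str
    rule_ref: str
    product: str


class DecisionLog:
    """Stores reviewer rewrites and surfaces them when the phrasing recurs."""

    def __init__(self) -> None:
        self._decisions: list[ReviewDecision] = []

    def record(self, decision: ReviewDecision) -> None:
        self._decisions.append(decision)

    def suggest(self, ad_text: str) -> list[ReviewDecision]:
        # Substring match is a deliberate simplification for this sketch.
        text = ad_text.lower()
        return [d for d in self._decisions if d.original.lower() in text]


log = DecisionLog()
log.record(
    ReviewDecision(
        original="guaranteed yield",
        rewrite="variable rate, subject to market conditions",
        rule_ref="COBS 4.5.2",
        product="savings",
    )
)

# Two weeks later, the same phrasing turns up on a different product.
for hit in log.suggest("Lock in a guaranteed yield on your ISA today"):
    print(f"Suggested fix: '{hit.rewrite}' (cited {hit.rule_ref}, first settled for {hit.product})")
```

The suggestion is still a suggestion. The reviewer decides whether the earlier rewrite holds for the new product.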

One ruleset, pre and post publish. The same knowledge base runs over drafts before they go out and over live assets after. If an affiliate edits a landing page six months later and removes an APR disclosure, drift detection spots it using the same rule the original pre-publish review applied.
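The point that one rule serves both stages can be shown with a single check applied to a draft and, later, to the live page. This is a minimal sketch: `missing_disclosures`, the required phrase, and both page texts are assumptions for illustration.

```python
def _normalise(text: str) -> str:
    """Lowercase and collapse whitespace so layout changes do not hide a match."""
    return " ".join(text.lower().split())


def missing_disclosures(page_text: str, required_phrases: list[str]) -> list[str]:
    """Return required phrases that do not appear in the page text."""
    haystack = _normalise(page_text)
    return [p for p in required_phrases if _normalise(p) not in haystack]


REQUIRED = ["representative apr"]  # illustrative rule

approved_draft = "Borrow today.  Representative APR shown before you apply."
edited_live_page = "Borrow today. Apply in minutes."

# Pre-publish: the draft satisfies the rule.
print(missing_disclosures(approved_draft, REQUIRED))

# Post-publish: the same rule catches the removed disclosure.
print(missing_disclosures(edited_live_page, REQUIRED))
```

Because both calls use the same function and the same rule list, a post-publish finding can be traced straight back to the condition the original approval depended on.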

Audit-ready evidence, by default. Every decision, rewrite, and approval is timestamped and tied back to the obligation it implements. When the FCA asks how you handled a specific claim on your TikTok presence in October, the answer takes minutes.
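What makes the answer take minutes is that each record already carries a timestamp and an obligation reference. A minimal sketch of such a record and a lookup by channel and month follows. The field names, `make_entry`, `entries_for`, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    asset_id: str
    channel: str
    action: str         # e.g. "approved" or "rewritten"
    obligation_id: str  # the obligation this decision implements
    reviewer: str
    timestamp: str      # ISO 8601, UTC


def make_entry(asset_id: str, channel: str, action: str, obligation_id: str,
               reviewer: str, now: Optional[datetime] = None) -> AuditEntry:
    """Create a timestamped entry; `now` can be injected for testing."""
    if now is None:
        now = datetime.now(timezone.utc)
    return AuditEntry(asset_id, channel, action, obligation_id, reviewer, now.isoformat())


def entries_for(log: list[AuditEntry], channel: str, year: int, month: int) -> list[AuditEntry]:
    """Filter the log to one channel and calendar month."""
    result = []
    for entry in log:
        when = datetime.fromisoformat(entry.timestamp)
        if entry.channel == channel and when.year == year and when.month == month:
            result.append(entry)
    return result


utc = timezone.utc
audit_log = [
    make_entry("tiktok-video-03", "tiktok", "approved", "risk-warning", "reviewer-a",
               datetime(2024, 10, 3, 9, 30, tzinfo=utc)),
    make_entry("tiktok-video-04", "tiktok", "rewritten", "risk-warning", "reviewer-b",
               datetime(2024, 11, 2, 14, 0, tzinfo=utc)),
    make_entry("landing-page-01", "web", "approved", "risk-warning", "reviewer-a",
               datetime(2024, 10, 8, 11, 0, tzinfo=utc)),
]

for entry in entries_for(audit_log, "tiktok", 2024, 10):
    print(entry)
```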

Three questions worth asking

If you want a read on whether your marketing compliance setup is fit for purpose, three questions usually surface the state of things.

When your team signs off a specific claim or disclaimer, does that sign-off travel to the next asset automatically? Or does the next reviewer reason it through from scratch? If the answer depends on who happens to be reviewing, your institutional knowledge is in people’s heads, not your infrastructure.

If the regulator or board called tomorrow and asked how you handled a specific claim across your website, social, and affiliate content over the last twelve months, could you pull up a clean, timestamped record? Or does someone have to dig through email threads and chats to reconstruct what happened?

And the most important one. Is your marketing compliance function producing intelligence, or approvals? There is a world of difference between the two. Approvals are a throughput metric. Intelligence is a control. A team producing approvals signs off whatever crosses its desk. A team producing intelligence knows, at any moment, which live assets are now exposed because a regulatory change happened, which claims have drifted on social media, which patterns are starting to look systemic. Where your team sits on that spectrum says a lot about whether marketing compliance is treated as a strategic control, or as a queue.

Zango is the AI compliance layer for financial institutions. Marketing Compliance connects live regulatory intelligence to your products, claims, and channels. Every asset gets reviewed in the context of what your firm has already decided, with audit-ready evidence recorded by default. Speak with our team to see how context-aware review works for your organisation.

Sources & further reading

- FCA financial promotions data 2024 (FCA)

- FCA outcomes and metrics 2022–2025 (FCA)

- FCA leads international crackdown on illegal finfluencers, June 2025 (FCA)

- COBS 4 Communicating with clients, including financial promotions (FCA Handbook)

- FSMA Section 21 Financial promotion (UK Legislation)

- PS23/6 Financial promotion rules for cryptoassets (FCA)

- ASA CAP Code Section 3 Misleading advertising (ASA)

Note: This post is for information only and does not constitute legal advice. Always apply your firm's policies and seek counsel where appropriate.
